Multi-Level Reinforcement Learning for Flow Control

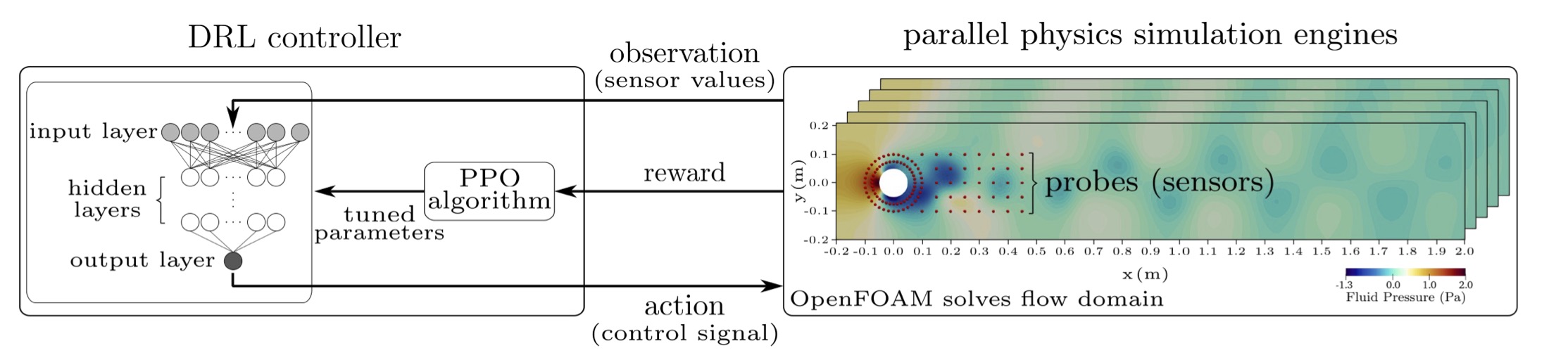

MLRL-Control is a UKRI-funded project (Grant reference: EP/V048899/1) that aims to develop efficient algorithms for active flow control. Flow control is the targeted manipulation of fluid flow fields to achieve a prescribed objective, such as reducing drag. Active flow control uses sensor measurements of the flow to adapt to incoming perturbations and to adjust to changing flow conditions. Despite its significance, general flow control remains a largely unsolved mathematical problem that arises in many sectors, including automotive and aerospace engineering and environmental subsurface flow. In this project, Reinforcement Learning (RL) algorithms will be used to learn general flow control policies from reliable simulated flow environments.
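The closed loop described above (sensor reading in, actuation out, objective scored at each step) can be sketched with a toy example. This is a minimal illustration under stated assumptions, not the project's code: `ToyFlowEnv`, `feedback_policy` and `run_episode` are hypothetical names, the scalar dynamics are a made-up stand-in for a flow simulation, and a hand-written proportional feedback rule takes the place of a policy learned with RL.

```python
import random


class ToyFlowEnv:
    """Hypothetical scalar stand-in for a simulated flow environment.

    The state is a single number playing the role of a flow perturbation
    measured by one sensor. It is NOT a fluid solver.
    """

    def __init__(self, seed=0):
        self.rng = random.Random(seed)
        self.state = 0.0

    def reset(self):
        # Start from a fixed initial perturbation.
        self.state = 1.0
        return self.state  # the "sensor reading"

    def step(self, action):
        # Assumed dynamics: slow natural decay, actuation, random disturbance.
        disturbance = self.rng.uniform(-0.05, 0.05)
        self.state = 0.9 * self.state + action + disturbance
        # Reward is the negative of a drag-like penalty on the perturbation.
        reward = -(self.state ** 2)
        return self.state, reward


def feedback_policy(observation, gain=0.5):
    """Proportional feedback: push against the measured perturbation."""
    return -gain * observation


def run_episode(env, policy, steps=50):
    """Run one sensor -> action -> reward loop and return the total reward."""
    observation = env.reset()
    total_reward = 0.0
    for _ in range(steps):
        action = policy(observation)
        observation, reward = env.step(action)
        total_reward += reward
    return total_reward


if __name__ == "__main__":
    controlled = run_episode(ToyFlowEnv(seed=0), feedback_policy)
    uncontrolled = run_episode(ToyFlowEnv(seed=0), lambda obs: 0.0)
    print("feedback policy return:", controlled)
    print("no actuation return:   ", uncontrolled)
```

With the same disturbance sequence, the feedback rule accumulates a smaller penalty than applying no actuation. An RL algorithm would aim to find such a policy from interaction data alone rather than having it written by hand.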

Work packages and tasks

- WP1: Basic formulation and test suite development

- WP2: Formulation and algorithmic development of multi-level RL algorithms

- WP3: Evaluation studies and software release

Project team at Heriot-Watt University (HWU)

- Principal Investigator: Ahmed H. Elsheikh

- Postdoctoral Research Associate: Mosayeb Shams

- Research Associate: Atish Dixit

Project Publications

- Mosayeb Shams, Ahmed H. Elsheikh, Gym-preCICE: Reinforcement learning environments for active flow control, SoftwareX, Volume 23 (2023), URL

- Atish Dixit, Ahmed H. Elsheikh, Robust Optimal Well Control using an Adaptive Multigrid Reinforcement Learning Framework, Mathematical Geosciences, Volume 55, 345–375 (2023), URL

- Atish Dixit, Ahmed H. Elsheikh, Stochastic optimal well control in subsurface reservoirs using reinforcement learning, Engineering Applications of Artificial Intelligence, Volume 114 (2022), URL

- Atish Dixit, Ahmed H. Elsheikh, A multilevel reinforcement learning framework for PDE based control, arXiv preprint (2022), URL